
SenseTime Releases Open-Source Multimodal AI Model "INTERN 2.5"

March 15, 2023 – SenseTime released its universal multimodal, multi-task model, "INTERN 2.5". The model achieves multiple breakthroughs in processing several modalities and tasks. Its strong cross-modal, open-task processing capability provides efficient and precise perception and understanding for general scenarios such as autonomous driving and robotics. The model was first jointly released by SenseTime, Shanghai Artificial Intelligence Laboratory, Tsinghua University, The Chinese University of Hong Kong, and Shanghai Jiao Tong University in November 2021.

The visual core of "INTERN 2.5" is the InternImage-G large-scale vision foundation model. With 3 billion parameters, "INTERN 2.5" achieved a Top-1 accuracy of 90.1% on ImageNet using only publicly available data. It is both the most accurate and the largest open-source model in the world, and the only model to exceed 65.0 mAP on the COCO object detection benchmark. The "INTERN 2.5" universal multimodal model is open-sourced on OpenGVLab (https://github.com/OpenGVLab/InternImage).
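For readers unfamiliar with the metric, Top-1 accuracy is the fraction of images for which the highest-scoring predicted class matches the ground-truth label. The following is a minimal sketch of that calculation, not the evaluation code used for "INTERN 2.5"; the function name `top1_accuracy` and the toy scores are illustrative assumptions.

```python
def top1_accuracy(scores, labels):
    """Fraction of samples whose highest-scoring class equals the true label.

    scores: list of per-class score lists, one list per sample (hypothetical data).
    labels: list of integer ground-truth class indices.
    """
    if not labels or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and equal in length")
    correct = 0
    for row, label in zip(scores, labels):
        # Index of the class with the largest score for this sample.
        predicted = max(range(len(row)), key=row.__getitem__)
        if predicted == label:
            correct += 1
    return correct / len(labels)


# Toy example: four samples, three classes (made-up numbers).
toy_scores = [
    [0.1, 0.7, 0.2],  # predicted class 1
    [0.8, 0.1, 0.1],  # predicted class 0
    [0.3, 0.3, 0.4],  # predicted class 2
    [0.2, 0.5, 0.3],  # predicted class 1
]
toy_labels = [1, 0, 2, 0]
accuracy = top1_accuracy(toy_scores, toy_labels)
print(accuracy)  # 0.75: three of the four predictions match
```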

AI development currently faces many challenges in cross-modal tasks. The newly released "INTERN 2.5" is dedicated to building a universal multimodal, multi-task model that can receive and process inputs from various modalities, handle different tasks with a unified model architecture and parameters, and promote cooperation between modalities and tasks.

"INTERN 2.5" Advances Artificial General Intelligence (AGI) and Boosts Perception Capability in General Scenarios

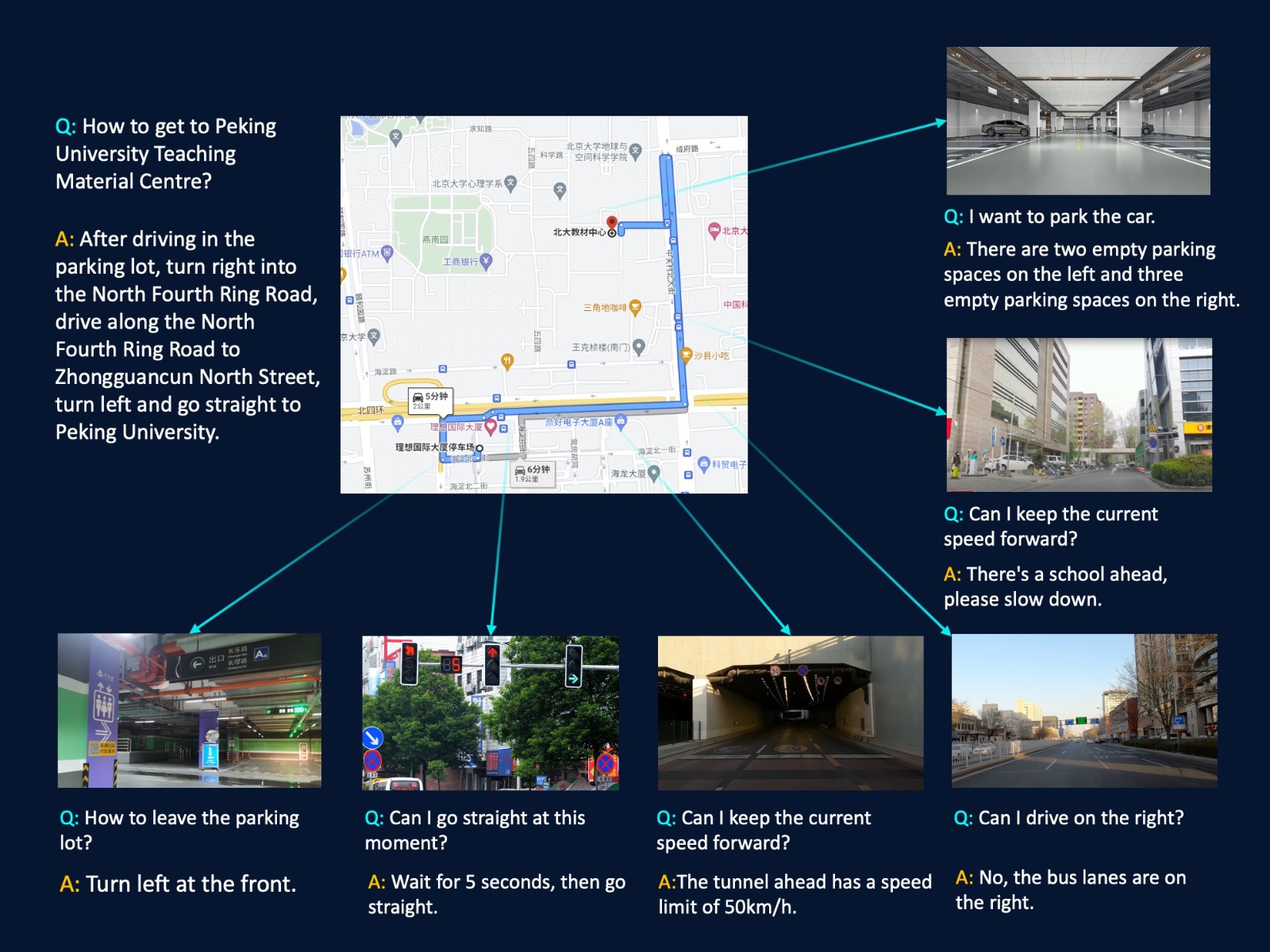

"INTERN 2.5" demonstrates strong understanding and problem-solving abilities, including but not limited to image description, visual question-answering, visual reasoning, and text recognition. For example, in general scenarios such as autonomous driving, "INTERN 2.5" can assist in handling various complex tasks, including efficiently and accurately assisting vehicles judge traffic signal status, road signs, and other information.

Another important direction for AI-generated content (AIGC) is "text-to-image". "INTERN 2.5" can generate high-quality, natural, and realistic images using a diffusion-model generation algorithm. For example, it can generate a variety of realistic road traffic scenes, such as busy city streets, crowded lanes on rainy days, and dogs running on the road, which assists the development of autonomous driving systems and continuously improves perception in corner-case scenarios.

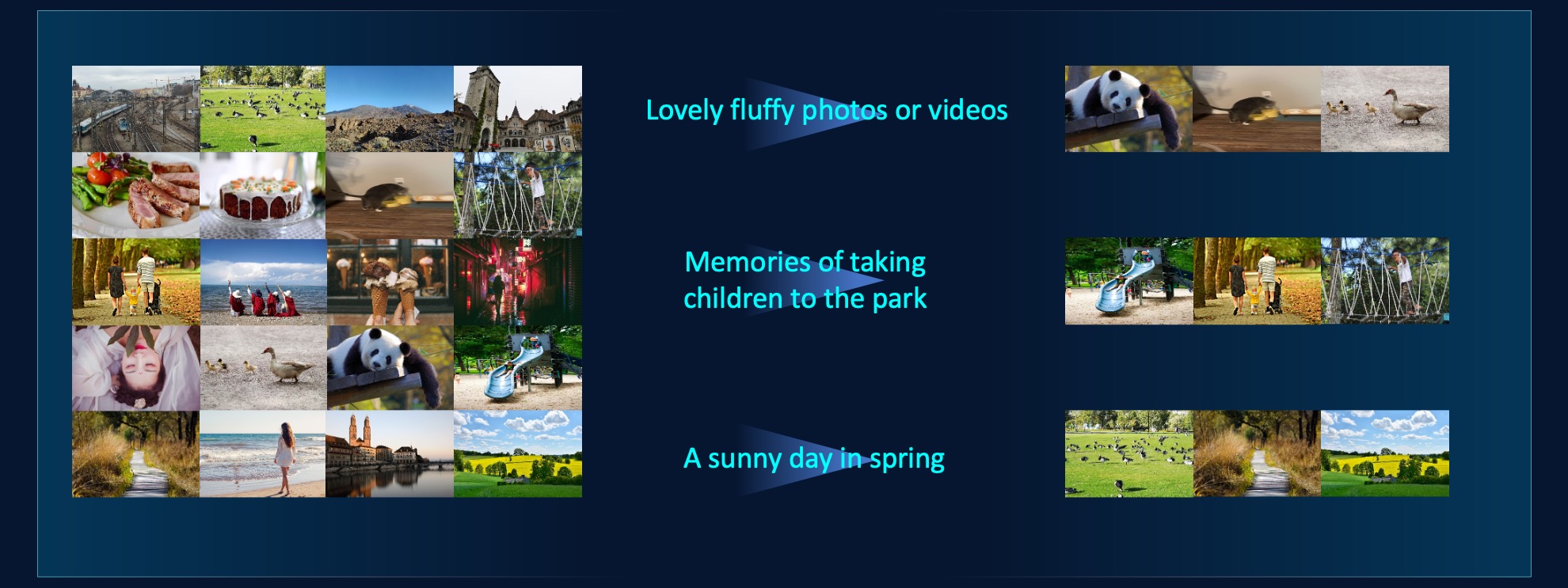

"INTERN 2.5" can also quickly locate and retrieve relevant images, helping users find the required image resources conveniently and quickly. For example, it can find the relevant images specified by the text in the album or find the video segments that are most relevant to the text description.

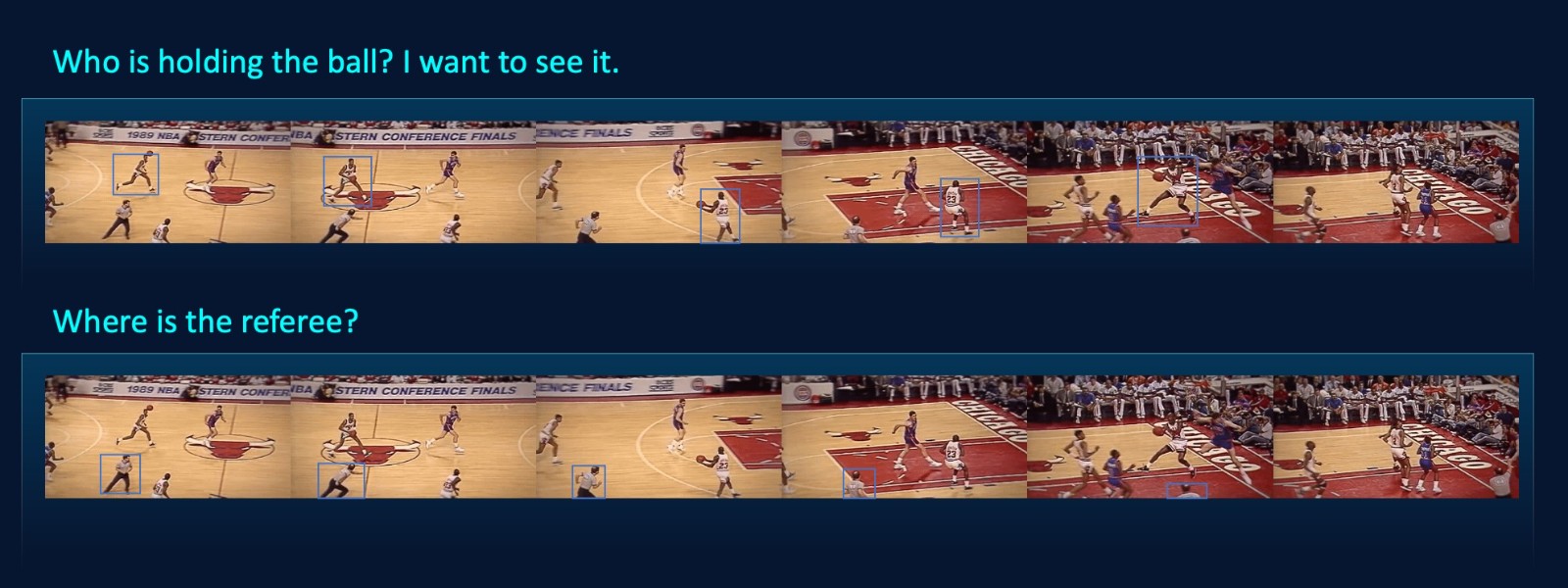

In addition, "INTERN 2.5" supports object detection and visual grounding in open-world videos and images, locating the objects most relevant to a text description.

As "INTERN 2.5" continues to learn and evolve, we remain committed to promoting breakthroughs in universal multimodal and multitask model technology, promoting AI innovation and application, and contributing to the growth of AI academia and industry.
