SenseTime Unveils SenseNova 4.0, Bringing a Novel AI Experience

SenseTime releases SenseNova 4.0, a multi-dimensional upgrade of its foundation model sets. SenseNova 4.0 demonstrates more advanced knowledge coverage with greater capabilities in reasoning, long text comprehension, numerical inference, code generation, and multimodal interactions. SenseChat V4, the latest general version of SenseNova's large language model ("LLM"), performs neck-and-neck with GPT-4 and surpasses GPT-3.5 in overall performance. (API application website: https://platform.sensenova.cn/)

SenseTime also introduces the SenseChat Function Call & Assistants API, its first multimodal tool-calling API. By bridging large models with application service tools, it significantly lowers the barrier for developers to utilize large models. Building upon the SenseChat Function Call & Assistants API, SenseTime launches a data analysis tool, Office Raccoon, which transforms large model capabilities into practical applications.

SenseTime's SenseNova foundation model sets unlock a wide array of AI applications in an efficient and cost-effective manner, such as SenseChat-DataAnalysis V4 for office contexts, SenseChat-Medical V4 for the healthcare sector, SenseChat-Vision V4 for autonomous driving and industrial scenarios, and SenseMirage V4 for creative use.

SenseTime's SenseChat LLM has already accelerated the intelligent transformation of over 500 customers across many vertical industries, including finance, mobile phones, healthcare, automotive, real estate, energy, media, and industrial manufacturing.

SenseTime's latest foundation model suite, along with its products and tools, paves a pivotal pathway towards the realization of artificial general intelligence ("AGI") by empowering the concept of "Large Model+" across various scenarios and industries and expanding the horizons of large model applications.

Enriched Foundational Model Sets with On-demand AI Capabilities

SenseNova 4.0 offers a variety of flexible APIs and services. Developers can easily draw on SenseNova's diverse skillsets to implement a myriad of AI applications more cost-effectively and efficiently.

SenseNova's enhancement is underpinned by its LLM upgrade. The latest general version of the LLM, SenseChat V4, expands its application scope by supporting context lengths of 4k, 32k, and 128k tokens, and demonstrates significant enhancements in knowledge, reading comprehension, long text comprehension, reasoning, mathematics, and coding. Its overall performance closely matches that of GPT-4, and it outperforms GPT-4 in the long text comprehension and coding categories. SenseChat V4 also achieves a one-pass rate of 75.6% on the authoritative HumanEval benchmark, compared with 74.4% for GPT-4.
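For readers unfamiliar with how a HumanEval pass rate is typically computed, the sketch below uses the unbiased pass@k estimator from the original HumanEval paper. It assumes the "one-pass rate" above corresponds to pass@1; the announcement does not state the exact evaluation protocol, and the function names here are illustrative.

```python
from math import comb
from typing import Iterable, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: samples generated, c: samples that passed the unit tests, k: budget.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failures exist, so any k samples include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: Iterable[Tuple[int, int]], k: int) -> float:
    """Average pass@k over (n, c) pairs, one pair per benchmark problem."""
    results = list(results)
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Assumption: one sample per problem on HumanEval's 164 problems.
# 124 solved problems would then give the reported 75.6%.
single_shot = [(1, 1)] * 124 + [(1, 0)] * 40
score = benchmark_pass_at_k(single_shot, k=1)
print(f"{score:.1%}")
```

Under the same single-sample assumption, 74.4% would correspond to 122 solved problems, so the reported gap amounts to about two problems out of 164.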

SenseChat V4’s overall performance closely matches that of GPT-4, according to the OpenCompass LLM evaluation platform.

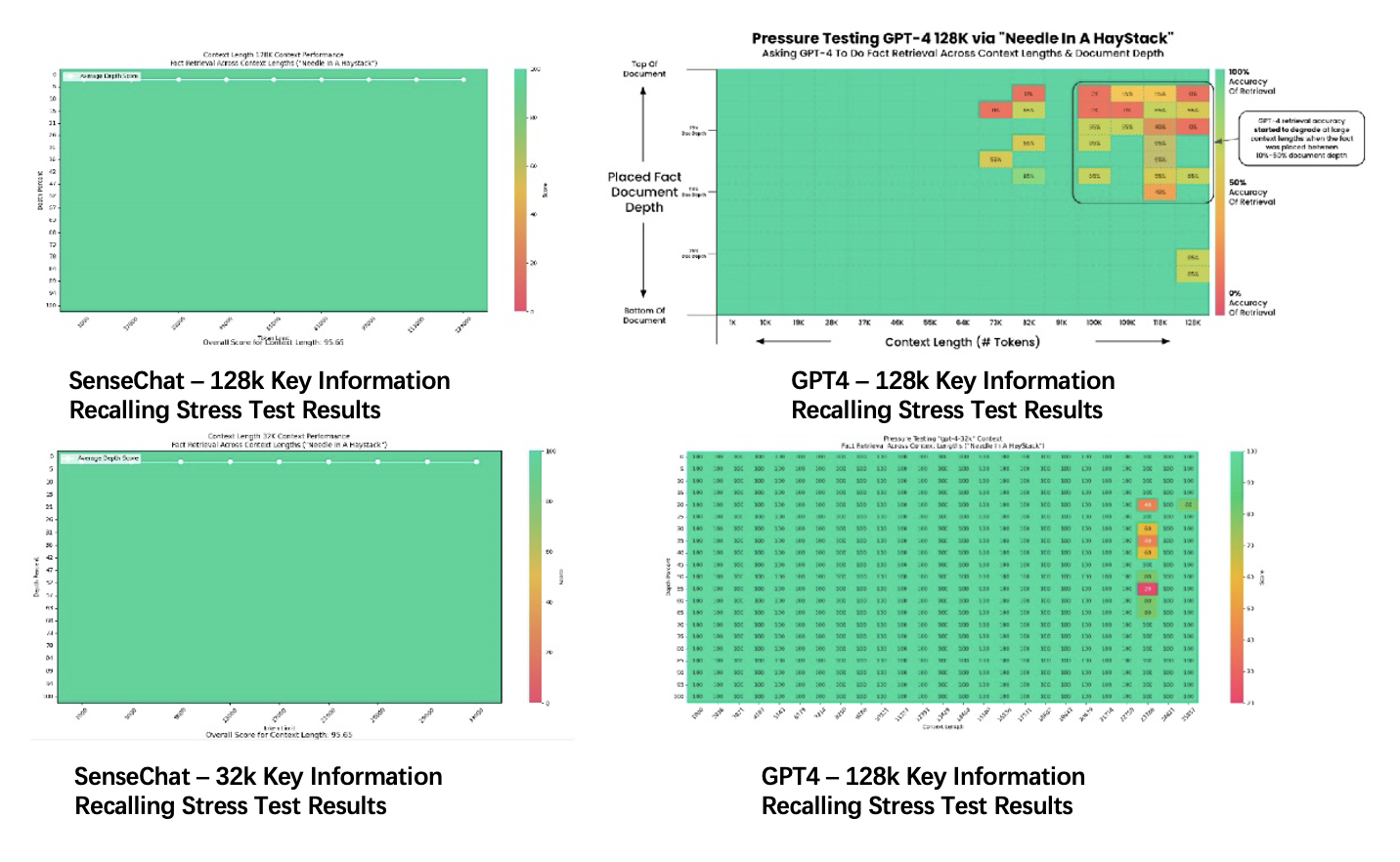

The above figures show the accuracy in recalling key information at varying context lengths (horizontal axis) and at different positions in the context (vertical axis) for SenseChat-128k and SenseChat-32k. Red indicates lower recall accuracy, while green indicates higher recall accuracy. The results reveal that SenseChat V4 maintains a near-perfect recall success rate as the context length extends to 32k or 128k, whereas GPT4-32K and GPT4-128k show weaker results.
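A recall grid of this kind can be produced by placing a known fact at different depths in contexts of different lengths and checking whether the model retrieves it. The following is a minimal sketch of that idea, not SenseTime's evaluation harness: the filler text, the needle, and `toy_model` (a stand-in that only reads the first 2,000 characters) are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

FILLER = "The sky was clear and the market was quiet that day."
NEEDLE = "The secret passcode is 7421."
QUESTION = "What is the secret passcode?"
EXPECTED = "7421"


def build_context(num_sentences: int, depth: float) -> str:
    """Insert NEEDLE at a relative depth (0.0 = start, 1.0 = end) in filler text."""
    sentences = [FILLER] * num_sentences
    sentences.insert(round(depth * num_sentences), NEEDLE)
    return " ".join(sentences)


def recall_grid(
    ask: Callable[[str, str], str],
    lengths: List[int],
    depths: List[float],
) -> Dict[Tuple[int, float], bool]:
    """Return {(context length, depth): whether the answer contained the fact}."""
    grid = {}
    for n in lengths:
        for d in depths:
            answer = ask(build_context(n, d), QUESTION)
            grid[(n, d)] = EXPECTED in answer
    return grid


def toy_model(context: str, question: str) -> str:
    """Hypothetical stand-in for an LLM call: it only 'sees' 2,000 characters."""
    return EXPECTED if NEEDLE in context[:2000] else "I don't know."


grid = recall_grid(toy_model, lengths=[10, 100], depths=[0.0, 0.5, 1.0])
for (n, d), found in sorted(grid.items()):
    print(f"length={n:>3} sentences, depth={d:.1f}: {'hit' if found else 'miss'}")
```

In a real evaluation, `toy_model` would be replaced by a call to the model under test, lengths would be measured in tokens, and each cell would be averaged over several trials before being colored.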

SenseNova's new code interpreter, SenseChat-DataAnalysis V4, surpasses GPT-4 with an accuracy rate of 85.71% on a set of more than 1,000 questions covering data analysis scenarios. It demonstrates comprehension of complex and multiple forms and files, and handles common data analysis tasks such as data cleaning, data operations, comparative analysis, trend analysis, predictive analysis, and visualization. It can be applied in various scenarios, including financial analysis, business analysis, sales forecasting, market analysis, and macro analysis.

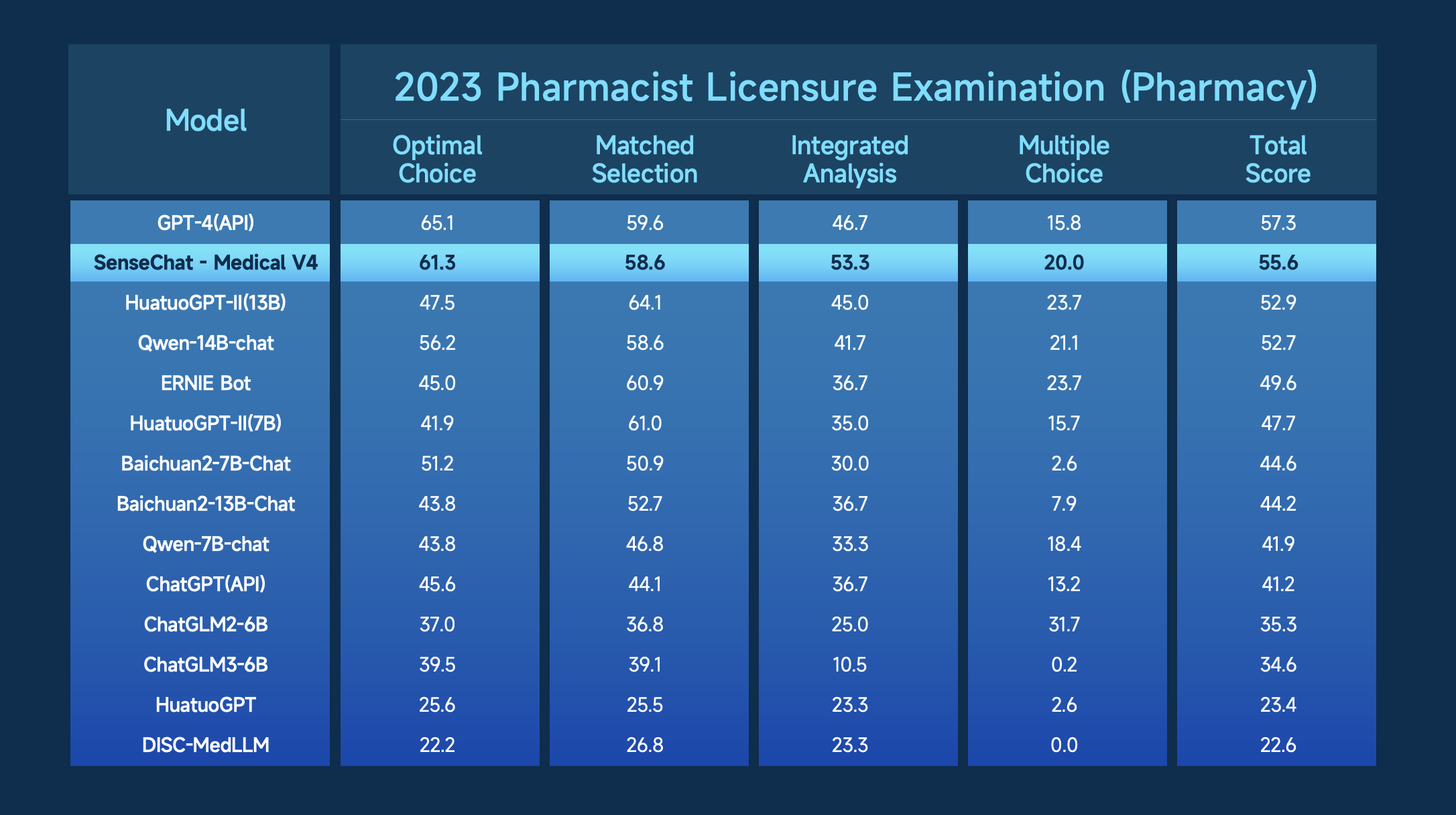

SenseChat-Medical V4, SenseTime's upgraded medical LLM, boasts stronger capabilities for multi-round dialogues, context understanding, and tool invocation. It can handle professional medical Q&A, complex inference for medical tasks, intelligent interpretation, and interactive Q&A of multimodal medical documents. SenseChat-Medical V4's overall performance closely matches that of GPT-4 and ranks second in two authoritative industry evaluations: the 2023 Pharmacist Licensure Examination LLM evaluation and the Chinese medical LLM evaluation benchmark MedBench. In the former evaluation, it even outperforms GPT-4 in two categories.

SenseChat-Medical V4 ranks second in the overall score of 2023 Pharmacist Licensure Examination LLM evaluation and outperforms GPT-4 in two categories.

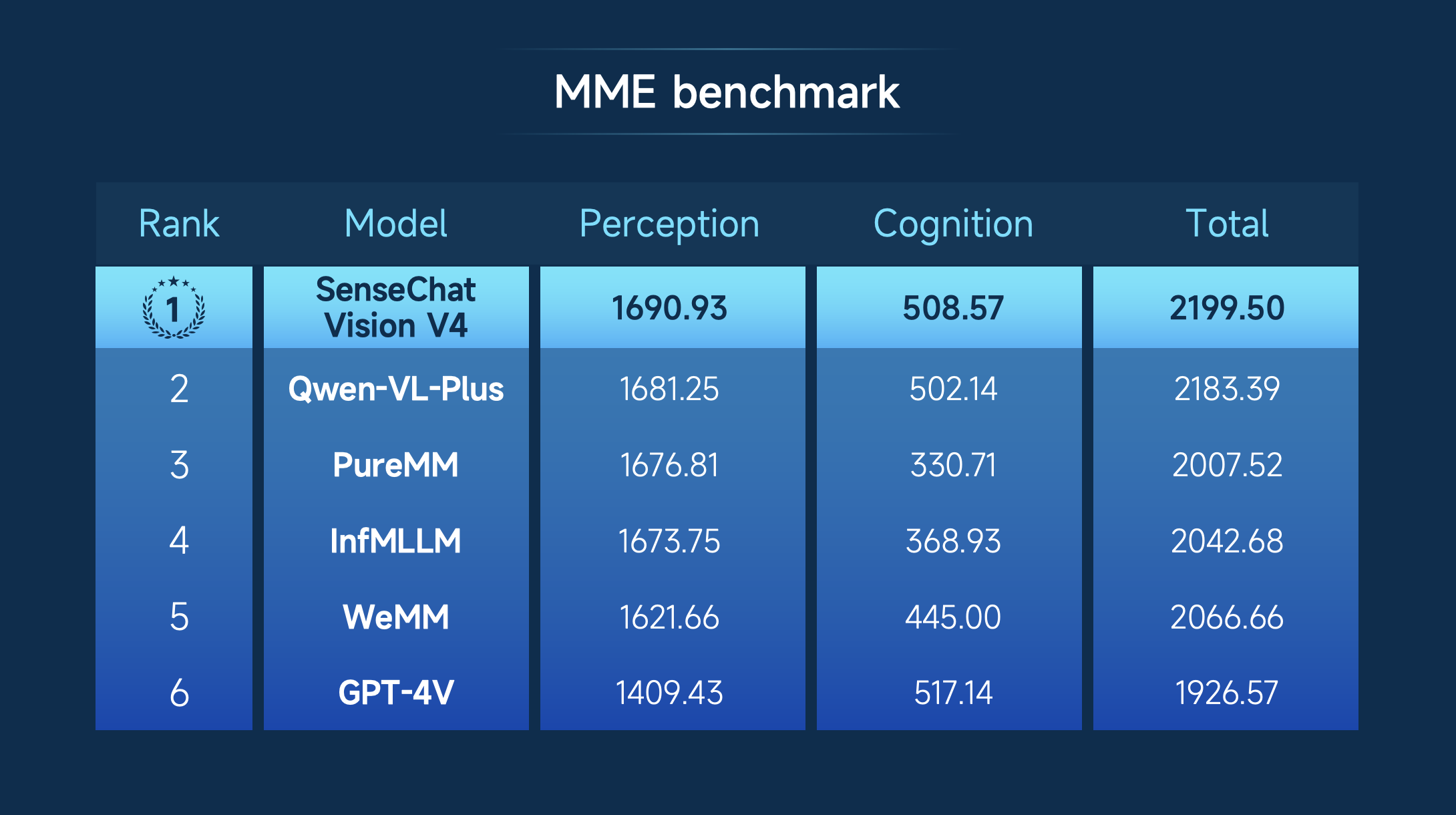

Multimodal AI represents a pivotal phase in the evolution of large AI models. SenseChat-Vision V4, a large multimodal model ("LMM") with 30 billion parameters and world-leading image and text comprehension abilities, achieves the top comprehensive score on the MME Benchmark (2199.5 vs GPT-4's 1926.57), an authoritative test for LMMs. SenseChat-Vision V4 enables a wider array of industrial upgrades through practical applications in areas such as intelligent driving, smart cabins, and the power industry.

The authoritative MME Benchmark evaluates LMMs across 14 dimensions, such as positioning, celebrity recognition, scenic spot recognition, OCR, and mathematical calculations.

The upgraded text-to-image generation model, SenseMirage V4, can produce cinematic-grade posters with enhanced context, texture, and details. This is owing to an increase in its parameter count to 10 billion and to the optimization of its mixture of text experts and spatial-aware CFG algorithms, which result in a marked advancement in prompt comprehension and image rendering capabilities. The newly introduced SenseMirage-Turbo V4 incorporates an adversarial distillation algorithm, and its inference is 10 times faster than that of the V4 basic version.

The upgraded SenseMirage V4 enables one-click cinematic-grade poster generation.

The First Multimodal Function Call & Assistants API - A Dedicated Development Assistant in the Large Model Era

To empower developers and related industries to utilize large models more conveniently and efficiently, SenseTime launches the SenseChat Function Call & Assistants API. Developers can leverage its highly flexible and customizable tool-calling framework, which includes tools such as network search, code interpretation, image and text Q&A, and text-to-image generation. This allows developers to utilize the SenseNova product suite for various industry uses.

This pioneering multimodal tool-calling API facilitates multimodal interactions by combining text and images, and can present the code it executes for data analysis. The goal is to tackle more complex problems and integrate AI capabilities into various applications in a more efficient and straightforward manner.
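To illustrate the general idea behind tool calling, the sketch below shows a generic dispatch step: the model emits a structured request naming a tool and its arguments, and the application runs the matching function and returns the result. This is not the SenseChat Function Call & Assistants API; the JSON shape, the `TOOLS` registry, and both example tools are assumptions made for illustration only.

```python
import json
from typing import Any, Callable, Dict, List

# Hypothetical registry mapping tool names to local Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("column_mean")
def column_mean(values: List[float]) -> float:
    """Toy data analysis tool: arithmetic mean of a column."""
    return sum(values) / len(values)


@tool("web_search")
def web_search(query: str) -> str:
    """Stub for a network search tool; a real one would call a search service."""
    return f"[stub results for: {query}]"


def dispatch(tool_call_json: str) -> str:
    """Run the tool named in a model-emitted request and return a JSON reply."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**call.get("arguments", {}))
    return json.dumps({"name": name, "result": result})


# Assumed request format, as if produced by a model.
request = '{"name": "column_mean", "arguments": {"values": [2, 4, 6]}}'
print(dispatch(request))
```

In a full loop, the JSON reply would be passed back to the model so that it can compose its final answer from the tool output.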

SenseNova Empowers Industrial Upgrades and Accelerates Innovative Implementations

With large models transforming human-machine interactions and SenseNova's iterative upgrades reshaping product applications, SenseTime launches Office Raccoon to meet data analysis needs.

Office Raccoon is a user-friendly data analysis tool that requires no coding or complicated operations. By integrating SenseNova's intent recognition, logic understanding, and code generation capabilities, Office Raccoon automatically converts raw data into meaningful analysis and visualization results through natural language input. It is well-suited to meet the data analysis demand in China, given SenseNova's strong Chinese language comprehension capabilities.

These products represent SenseTime's many endeavors in large model technology applications. Since its launch on April 10 last year, SenseNova has attracted over 3,000 enterprise users across industries including internet, gaming, culture and tourism, education, healthcare, finance, and programming.

SenseTime is committed to making large models more accessible, expanding AI application scenarios, and ensuring effective large model utilization across industries. The company will continue to advance SenseNova to unlock innovative and front-loading implementations, empower a wider array of scenarios and industries, and bring the AI ecosystem as a whole towards the era of AGI.
